Evolutionary & Hybrid AI

a VUB AI Lab research team

Mission

We aim to build truly intelligent systems that are able to interact with and reason about their native environment in order to solve an open-ended set of tasks. Our systems are deeply inspired by evolutionary principles such as self-organisation, selection and emergent functionality, and are therefore adaptive by design. We adopt a hybrid approach that integrates symbolic and subsymbolic AI techniques, combining their strengths to achieve general, accurate and interpretable models. We focus in particular on tasks that require human language-like communication, involving advanced perception, reasoning and learning skills. We investigate fundamental research questions that have a tight connection to real-world problems.

Expertise

The EHAI team has extensive and unique expertise in the domains of emergent communication and computational construction grammar.

-

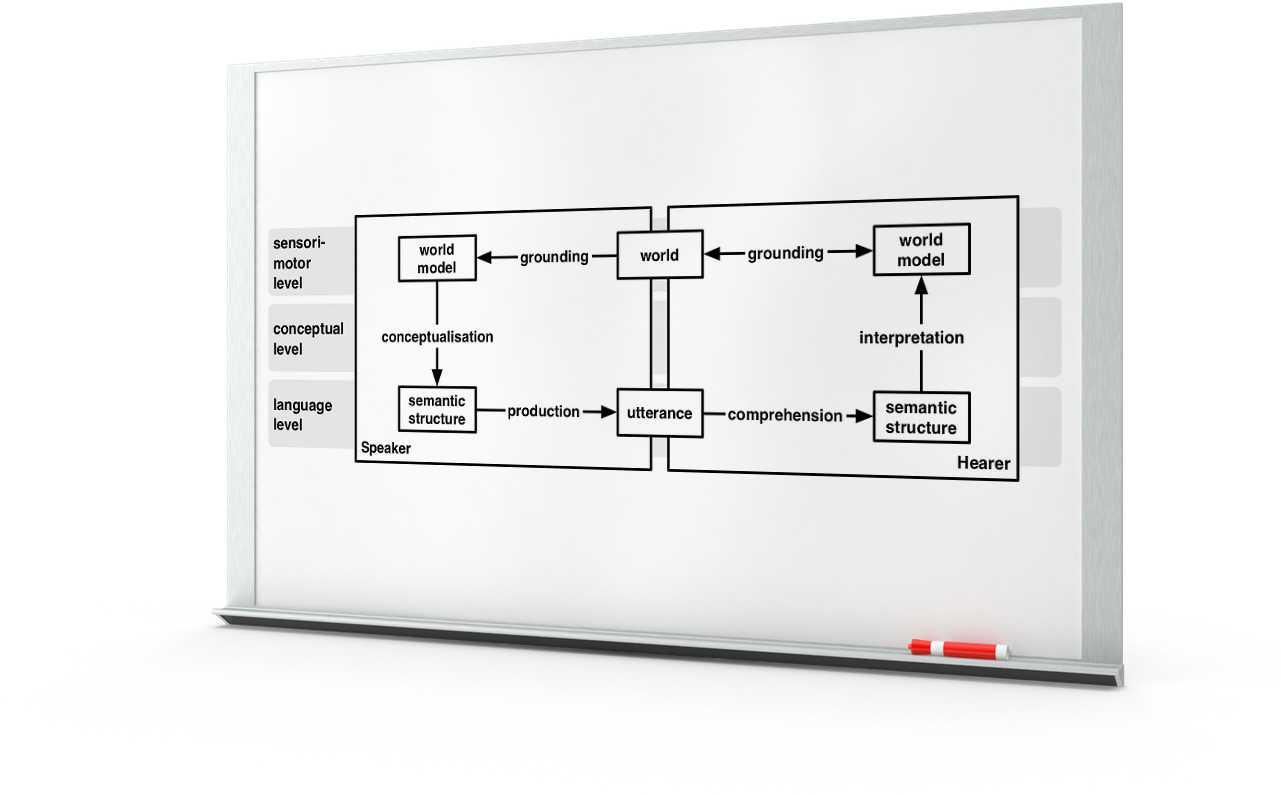

Emergent Communication

Which mechanisms does a population of agents need for constructing conceptual and linguistic structures that are adequate for interacting with, reasoning about and acting in their native environment?

-

Computational Construction Grammar

How can a machine learn the most basic function of natural language, namely that of mapping between rich meaning representations and expressive linguistic utterances?

Featured projects

A selection of exciting research projects that showcase our expertise and provide insight into our long-term research program.

-

Computational Construction Grammar: A Practical Introduction

We are working on an introductory textbook that explains in a step-by-step manner how construction grammars can be computationally implemented. The book is at the same time an ideal textbook for courses on construction grammar or computational linguistics, and an indispensable resource for construction grammar researchers who would like to run their analyses on corpora.

-

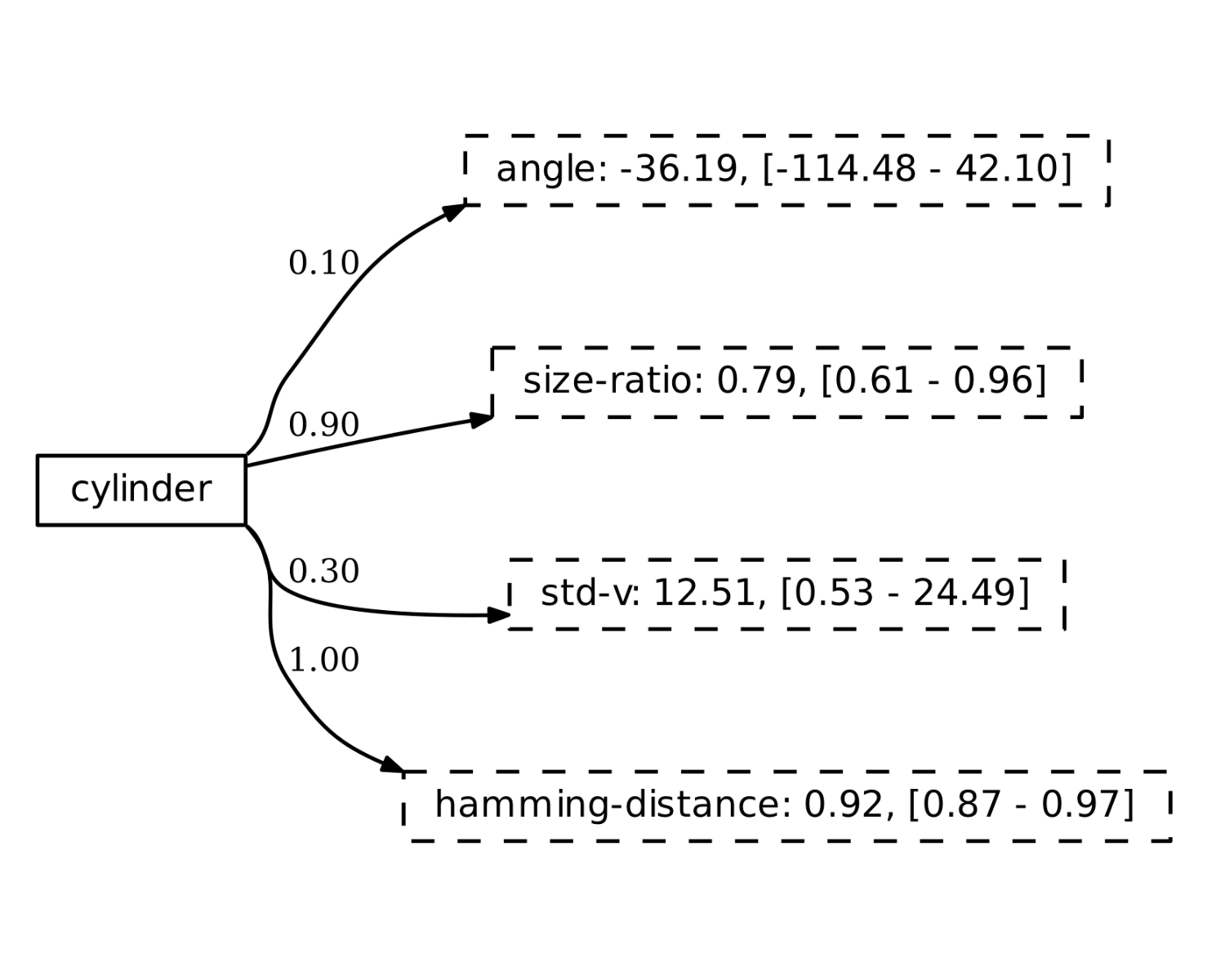

Grounded Concept Learning

This project investigates how autonomous agents can distill meaningful concepts and words from continuous streams of perceptual data. The concepts are constructed through task-based communicative interactions and reflect properties of the world that are relevant for the task. The agents either learn the conceptual system of an existing natural language, or construct their own conceptual system and language.
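As a rough illustration of how a concept can reflect the properties that matter for a task, the sketch below picks the feature channel that best sets a topic object apart from the other objects in its context, and then nudges a prototype value for that channel towards the observation. It is a minimal sketch under stated assumptions, not the project's actual method: the feature names, the `[0, 1]` scaling, the margin criterion and the update rate are all hypothetical choices made for this example.

```python
# Hypothetical representation: each object is a dict of continuous
# feature channels, assumed to be scaled to the range [0, 1].
Observation = dict[str, float]


def most_discriminating_feature(topic: Observation, others: list[Observation]) -> str:
    """Return the channel on which the topic is furthest from its nearest competitor.

    Assumes `others` is non-empty and that all objects share the same channels.
    """
    def margin(feature: str) -> float:
        return min(abs(topic[feature] - other[feature]) for other in others)

    return max(topic, key=margin)


def update_prototype(prototype: float, observed: float, rate: float = 0.1) -> float:
    """Shift a concept's prototype value a small step towards a new observation."""
    return prototype + rate * (observed - prototype)


topic = {"hue": 0.9, "size": 0.5}
others = [{"hue": 0.1, "size": 0.55}, {"hue": 0.2, "size": 0.45}]

# In this toy setting a concept is just one prototype value per channel;
# the associated word form is left out.
concepts = {"hue": 0.7}

feature = most_discriminating_feature(topic, others)
concepts[feature] = update_prototype(concepts.get(feature, topic[feature]), topic[feature])
print(feature, round(concepts[feature], 2))
```

In this toy context the topic differs from both other objects much more in hue than in size, so hue is selected and its prototype moves slightly towards the observed value.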

-

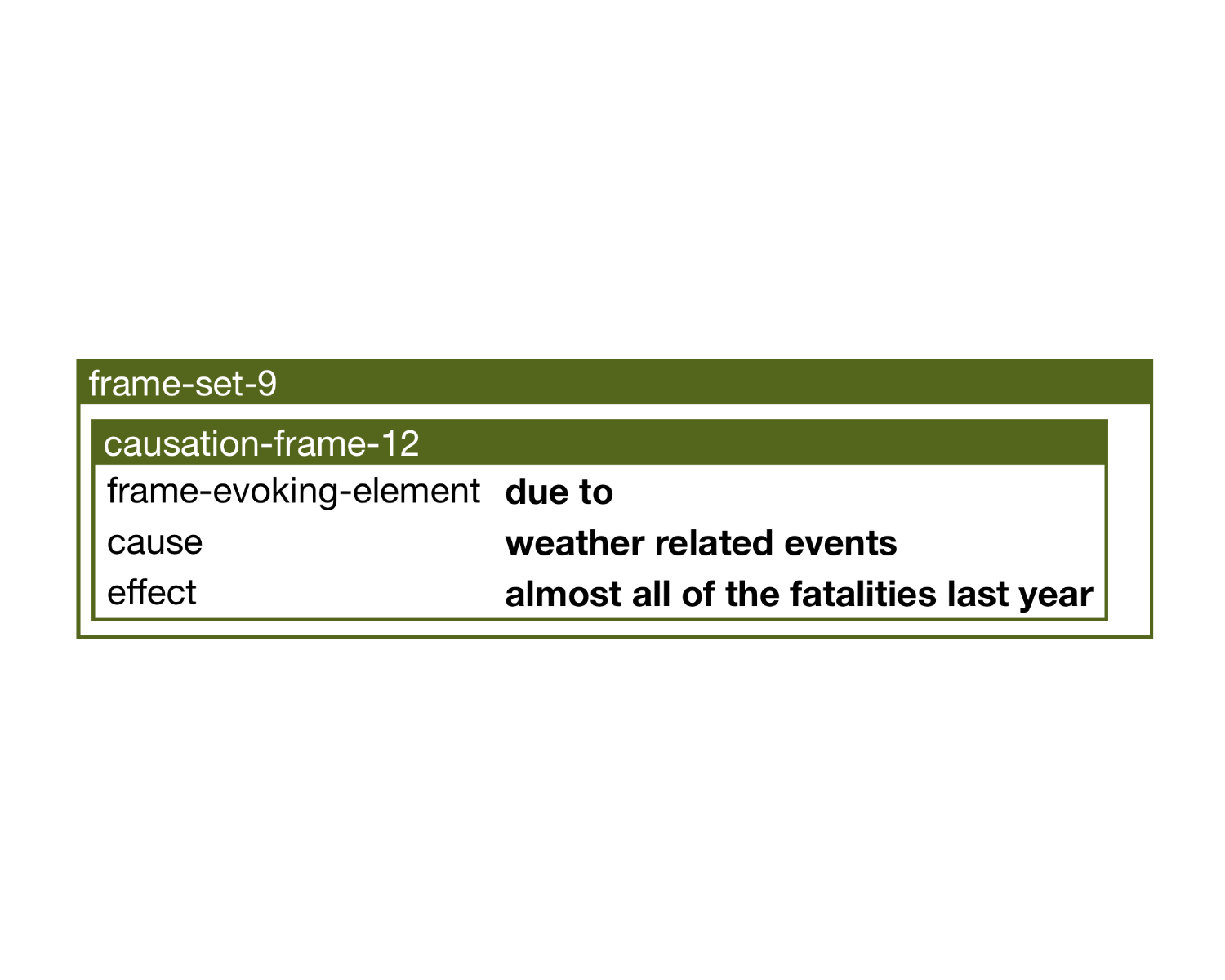

Semantic Frame Extractor

The semantic frame extractor combines dependency parsing with computational construction grammar to extract semantic frames from text corpora. We have applied the newly developed method to a corpus of English newspaper articles, in order to study expressed causal relations in the climate change debate.

-

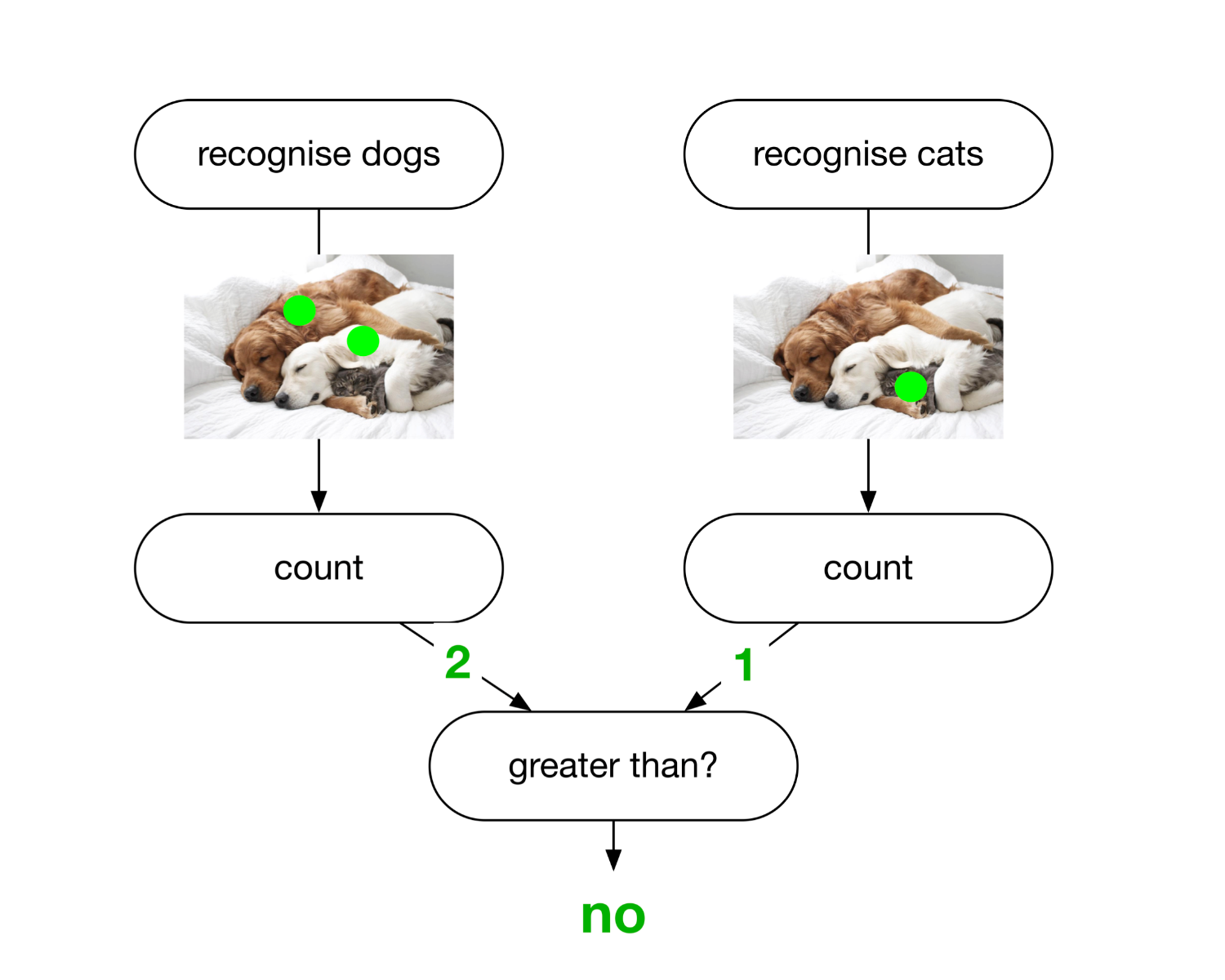

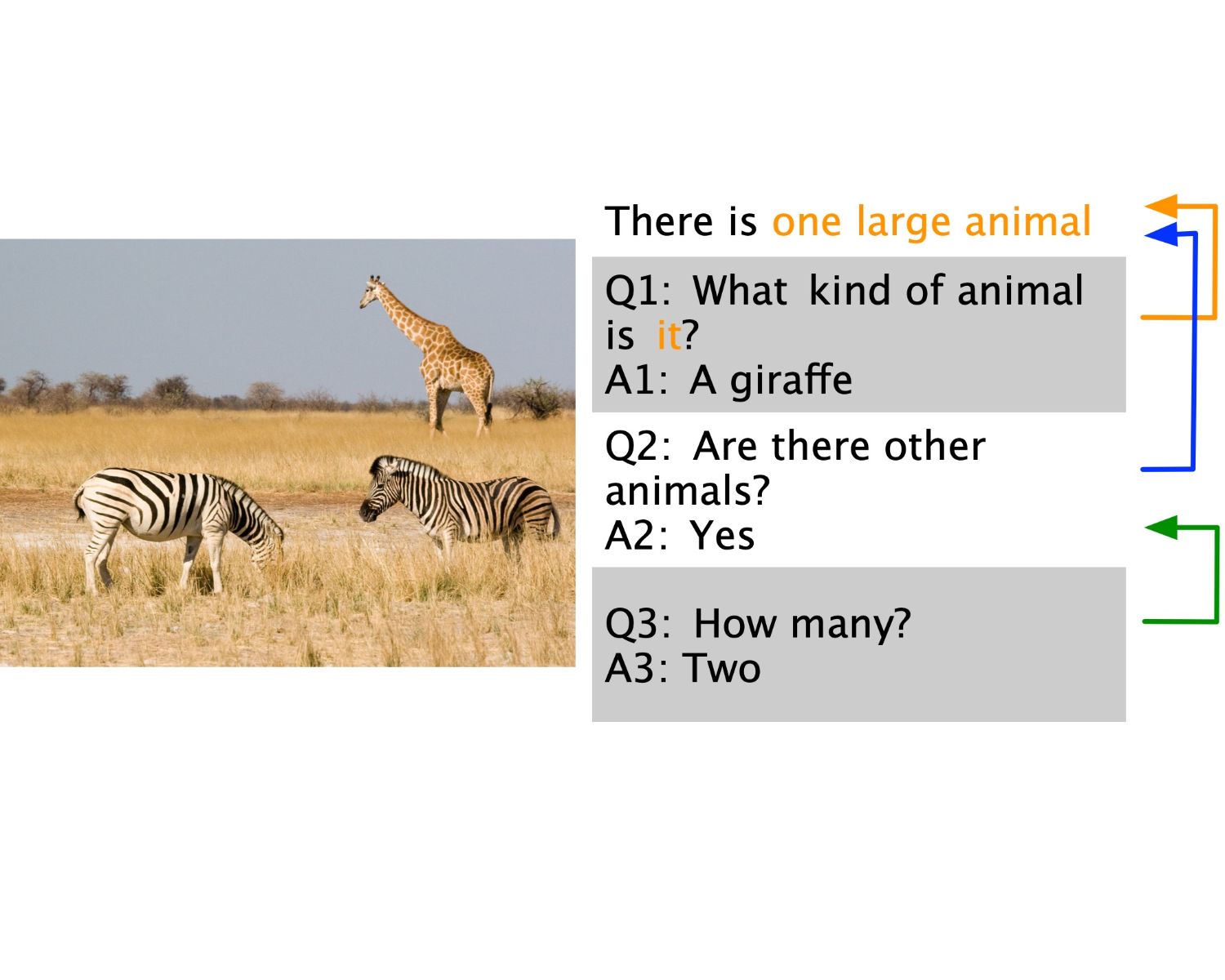

Visual Question Answering

In this project, we apply our language technologies to the problem of visual question answering. The task requires understanding a natural language question, reasoning about its relation to a given image and answering the question. We adopt a hybrid and modular approach that parses the question into a procedural semantic representation and executes it on the image.
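To make the idea of an executable meaning representation concrete, here is a minimal sketch that runs a small program of primitive operations on a symbolic scene. Everything in it is an assumption made for illustration: the primitives `filter`, `count` and `query`, the scene format, and the hand-written programs, which stand in for the output of the parsing step. It does not reproduce the team's actual primitives or grammar.

```python
from typing import Any, Callable

# Hypothetical symbolic scene: in a real system, these object descriptions
# would be produced by a perception module applied to the image.
Scene = list[dict[str, str]]


def filter_by(objects: Scene, attribute: str, value: str) -> Scene:
    """Keep only the objects whose attribute has the given value."""
    return [obj for obj in objects if obj.get(attribute) == value]


def count(objects: Scene) -> int:
    """Count the objects that are left."""
    return len(objects)


def query(objects: Scene, attribute: str) -> str:
    """Return an attribute of the single remaining object."""
    if len(objects) != 1:
        raise ValueError(f"expected exactly one object, got {len(objects)}")
    return objects[0][attribute]


PRIMITIVES: dict[str, Callable[..., Any]] = {
    "filter": filter_by,
    "count": count,
    "query": query,
}


def execute(program: list[tuple], scene: Scene) -> Any:
    """Run each primitive on the result of the previous one, starting from the scene."""
    result: Any = scene
    for name, *args in program:
        result = PRIMITIVES[name](result, *args)
    return result


scene = [
    {"shape": "cube", "colour": "red"},
    {"shape": "sphere", "colour": "blue"},
    {"shape": "cube", "colour": "blue"},
]

# "How many blue things are there?"
how_many_blue = [("filter", "colour", "blue"), ("count",)]
# "What colour is the sphere?"
sphere_colour = [("filter", "shape", "sphere"), ("query", "colour")]

print(execute(how_many_blue, scene))  # 2
print(execute(sphere_colour, scene))  # blue
```

Keeping the program separate from the primitives that execute it is what makes such an approach modular: each primitive can be inspected or replaced on its own, for instance by a neural module that operates on pixels rather than on symbolic descriptions.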

-

Visual Dialog

We extend our hybrid approach to visual question answering to visual dialog tasks. There, the goal is to answer a series of questions that follow each other during a conversation. To keep track of what has been said, we develop techniques to represent, store and query the history of the dialogue. This involves designing a procedural semantics that interfaces with both the image and the dialogue history.
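The sketch below illustrates one simple way to represent, store and query a dialogue history so that a follow-up question can refer back to an earlier turn. It is a toy example under stated assumptions: the `Turn` and `DialogueHistory` classes, the symbolic scene and the rule of resolving "it" to the most recently mentioned objects are all hypothetical and are not taken from the team's system.

```python
from dataclasses import dataclass, field

SceneObject = dict[str, str]


@dataclass
class Turn:
    question: str
    answer: str
    referents: list[SceneObject]  # the objects this turn was about


@dataclass
class DialogueHistory:
    turns: list[Turn] = field(default_factory=list)

    def store(self, question: str, answer: str, referents: list[SceneObject]) -> None:
        self.turns.append(Turn(question, answer, referents))

    def last_referents(self) -> list[SceneObject]:
        """Return the most recent non-empty set of referents, e.g. to resolve 'it'."""
        for turn in reversed(self.turns):
            if turn.referents:
                return turn.referents
        return []


# Hypothetical symbolic scene standing in for the image.
scene = [
    {"shape": "cube", "colour": "red"},
    {"shape": "sphere", "colour": "blue"},
]
history = DialogueHistory()

# Turn 1: "Is there a sphere?" is answered from the image alone.
spheres = [obj for obj in scene if obj["shape"] == "sphere"]
history.store("Is there a sphere?", "yes" if spheres else "no", spheres)

# Turn 2: "What colour is it?" needs the history to find out what "it" is.
referents = history.last_referents()
answer = referents[0]["colour"] if len(referents) == 1 else "unknown"
history.store("What colour is it?", answer, referents)

print(answer)  # blue
```

The second question cannot be answered from the image alone, which is why the operation that resolves the pronoun queries the stored turns rather than the scene.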

Selected Publications

These publications are representative of the research carried out by the EHAI team. An exhaustive list can be found at the team members' research pages.

A Discrimination-Based Strategy for Grounded Concept Learning

Jens Nevens, Paul Van Eecke and Katrien Beuls

Frontiers in Robotics and AI 7, 2020, doi:10.3389/frobt.2020.00084

Demos

The following web demonstrations offer more insight into the techniques and methods we develop.

Visual Dialog

Demonstration of a grounded conversational agent that answers a series of questions.

View demo ›

Visual Question Answering (VQA) on real world images

Demonstration of a hybrid AI system for VQA on real world images using computational construction grammar and executable meaning representations.

View demo ›

Grounded Colour Naming Game

Demonstration of the Babel software toolkit with a grounded colour naming game experiment using the new robot interface package.

View demo ›

Semantic Parsing for VQA

A computational construction grammar for mapping between natural language questions and their executable meaning representations.

View demo ›

Smart Coffee Machine

A voice-activated coffee machine powered by Fluid Construction Grammar.

View demo ›

Software

The EHAI team co-develops a number of software tools that are available to the research community.

Babel Toolkit

A flexible toolkit for implementing and running agent-based experiments on emergent communication

Fluid Construction Grammar

A fully operational processing system for construction grammars

Penelope

An open modular software platform for the data-driven analysis of opinion dynamics in online textual media aimed at social scientists

Team

-

Prof. dr. Paul Van Eecke

Principal Investigator

-

Dr. Tom Willaert

Postdoctoral researcher

-

Dr. Lara Verheyen

Postdoctoral researcher

-

Jérôme Botoko Ekila

PhD researcher

-

Arno Temmerman

PhD researcher

We're always looking for talented AI researchers to join our interdisciplinary team.

Discover our vacancies or get in touch ›